Invisible usage

Teams are already using AI tools faster than policy can keep pace. Most organisations do not know what is approved, improvised, or entirely unknown.

The only platform that gives enterprises AI governance without surveillance.

Full visibility. Real-time policy enforcement. Zero content surveillance.

C.L.A.R.A. never sees prompts, responses, page content, clipboard text, or file contents.

Approved, unknown, and blocked activity resolved into clean governance events.

Approved and emerging AI use resolved into policy state.

Each event retains tool, outcome, timing, and severity. Readable content never leaves the browser.

These questions surface in security reviews, budget meetings, compliance discussions, and board questions. Waiting does not simplify them.

Code, credentials, customer data, and internal references can reach public tools in seconds unless policy works where the behaviour actually happens.

Approved licences, duplicate tools, and unmanaged experimentation fragment budgets. Finance needs clear visibility into which usage is strategic and which is drift.

Leaders are asked what controls exist, how policy is enforced, and what evidence can be produced. Informal answers do not survive formal review.

C.L.A.R.A. is delivered as two components: a lightweight browser companion managed by IT, and a cloud-native, multi-tenant admin dashboard. Detection happens locally. Governance runs on structured telemetry.

Managed deployment through enterprise IT with minimal user friction.

Policy-aware matching runs in-browser and discards readable content.

Central governance, evidence trails, and role-specific dashboard views.

Roll out a lightweight extension through standard enterprise controls. C.L.A.R.A. starts surfacing AI usage and policy events without demanding workflow changes from employees.

All pattern matching runs locally inside the browser. C.L.A.R.A. identifies risky behaviours and tool usage, applies policy, and sends only structured event metadata to the backend.
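The local-detection flow described above can be illustrated with a minimal sketch. It assumes a single email-like pattern standing in for a real detector, and the names `GovernanceEvent` and `inspect_locally` are hypothetical, not C.L.A.R.A.'s actual API. The point is only the shape of the output: the inspected text is matched and then dropped, and the returned event carries tool, category, outcome, severity, and timing.

```python
import re
import time
from dataclasses import dataclass, asdict

# Hypothetical stand-in for a real detector: an email-like string treated as PII.
EMAIL_LIKE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


@dataclass(frozen=True)
class GovernanceEvent:
    """Structured metadata only. There is deliberately no field for content."""
    tool: str
    category: str
    outcome: str
    severity: str
    timestamp: float


def inspect_locally(text, tool, now=None):
    """Match text against local patterns and return metadata about the match.

    The text is read only inside this function and is never stored or returned.
    """
    if EMAIL_LIKE.search(text):
        category, outcome, severity = "pii", "warn", "medium"
    else:
        category, outcome, severity = "none", "allow", "info"
    timestamp = time.time() if now is None else now
    return GovernanceEvent(tool, category, outcome, severity, timestamp)


event = inspect_locally(
    "Contact jane.doe@example.com about the renewal",
    tool="public-chatbot",
    now=0.0,
)
payload = asdict(event)  # what a backend would receive in this sketch
```

In this sketch the payload is a plain dictionary of five metadata fields, so nothing from the inspected text can reach the backend by construction.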

Security, IT, compliance, and leadership teams receive the visibility they need: discovered tools, policy outcomes, adoption posture, and defensible governance records across the organisation.

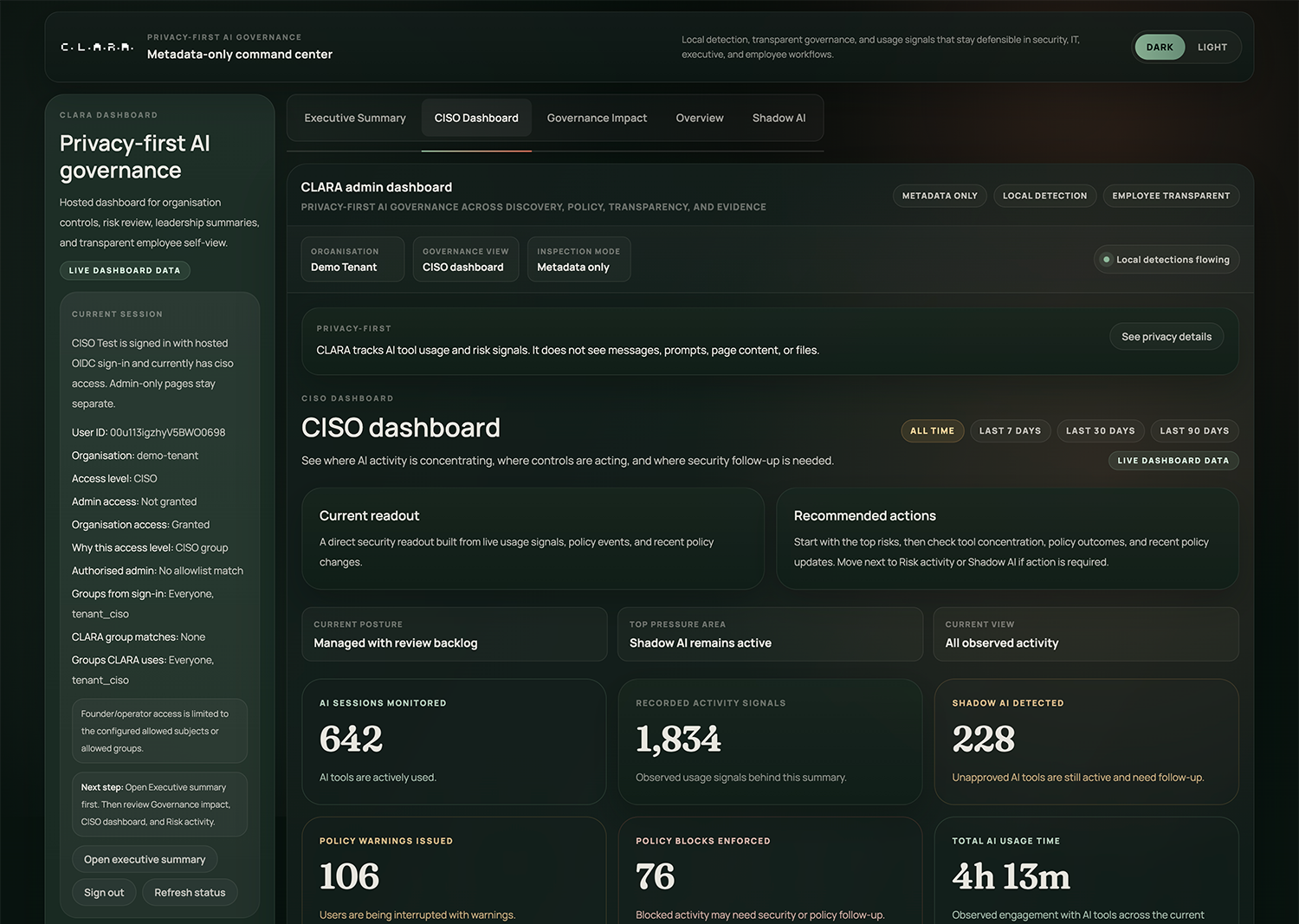

Focused product views from the pilot environment across governance posture, policy control, audit evidence, and employee transparency.

Security teams can move from uncertainty to board-ready control with live visibility into Shadow AI, high-risk activity, and the state of governance coverage.

The control plane brings forward the operating layer that matters most in each screen: posture, policy, evidence, and employee transparency.

C.L.A.R.A. is governance-first, not employee surveillance. The system is built so organisations can prevent risk, surface Shadow AI, and preserve trust at the same time.

All pattern matching runs locally in the browser. The backend receives structured event metadata only. That architecture is the privacy guarantee and the governance model.

C.L.A.R.A. includes an employee self-view so people can see what the system records and what it never reads. Trust is part of deployment, not a footnote for legal review.

Detection categories are enforced locally in the browser and resolved into governance outcomes. The platform remains precise without becoming invasive.

Policy can intervene when sensitive documents are pushed toward external AI tools.

High-confidence credential patterns can trigger immediate real-time prevention.

Engineering activity can be governed without capturing the source itself.

Personally identifiable information patterns can route users toward safer approved tools.

Controls can stop regulated data classes before they move into public model contexts.

Local inspection recognises cloud secret patterns and enforces immediate policy.

Large transfers into AI interfaces can be checked for policy relevance before submission.

Structured indicators help prevent large-scale exposure without storing the underlying data.

Unknown tools surface quickly so administrators can review, approve, or block them.

Enterprise-specific markers can support governance policy without reading the work itself.
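As a rough illustration of how detection categories like those above might resolve into a single governance outcome, the sketch below evaluates a few rules and keeps the strictest result. Everything here is an assumption for illustration: the rule names, the `LARGE_PASTE_CHARS` threshold, and the "strictest outcome wins" resolution are hypothetical, and the patterns are simplified (the `AKIA` prefix followed by 16 uppercase alphanumerics is the commonly documented shape of an AWS access key ID).

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    category: str
    pattern: re.Pattern
    outcome: str  # "warn" or "block"


# Hypothetical rule set; real detectors would be broader and tuned per tenant.
RULES = [
    Rule("cloud_secret", re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "block"),
    Rule("private_key", re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"), "block"),
    Rule("pii_email", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "warn"),
]

LARGE_PASTE_CHARS = 5000  # assumed threshold for a "large transfer"
RANK = {"allow": 0, "warn": 1, "block": 2}


def evaluate(text):
    """Return (outcome, matched categories). The text itself is not returned."""
    hits = [(r.category, r.outcome) for r in RULES if r.pattern.search(text)]
    if len(text) >= LARGE_PASTE_CHARS:
        hits.append(("large_paste", "warn"))
    # The strictest outcome among all hits wins; no hits means "allow".
    outcome = max((o for _, o in hits), key=RANK.__getitem__, default="allow")
    return outcome, sorted(c for c, _ in hits)


result = evaluate("key AKIAIOSFODNN7EXAMPLE, owner bob@example.com")
# result == ("block", ["cloud_secret", "pii_email"])
```

Only category labels and the outcome leave `evaluate`, which mirrors the claim that structured indicators can drive policy without storing the underlying data.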

C.L.A.R.A.'s dashboard is multi-tenant, role-aware, and polished across security, IT, compliance, finance, and employee transparency workflows.

Unknown tools, blocked tools, and approved environments stay visible in one governance view.

| Tool | Status | Risk label | Usage frequency |
|---|---|---|---|
| ChatGPT Enterprise | Approved | Managed | High |
| Public chatbot | Blocked | Open data risk | Medium |
| Code assistant extension | Review | Engineering review | Emerging |
| Design AI tool | Approved | Creative workflow | Moderate |
| Unknown finance tool | Review | Spend and data review | Low |

Shadow AI discovery is not a side report. It is an operational surface for understanding where governance must act first.

Security and IT can convert unknown tools into approved, warned, or blocked states without changing the detection architecture underneath.
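One way to picture this separation between detection and policy state is a small registry that records a status per tool. The sketch below is an assumption for illustration only: `ToolStatus`, `ToolRegistry`, and the outcome names are hypothetical, not the product's data model. Detection only calls `observe`; administrators change state through `set_status`, so the detection path stays the same when a tool's status changes.

```python
from enum import Enum


class ToolStatus(Enum):
    UNKNOWN = "unknown"
    APPROVED = "approved"
    WARNED = "warned"
    BLOCKED = "blocked"


# Assumed mapping from a tool's status to the policy outcome applied on use.
OUTCOME_BY_STATUS = {
    ToolStatus.UNKNOWN: "review",
    ToolStatus.APPROVED: "allow",
    ToolStatus.WARNED: "warn",
    ToolStatus.BLOCKED: "block",
}


class ToolRegistry:
    def __init__(self):
        self._status = {}

    def observe(self, tool):
        """Called by detection: a newly seen tool starts as UNKNOWN."""
        return self._status.setdefault(tool, ToolStatus.UNKNOWN)

    def set_status(self, tool, status):
        """Called by an administrator after review."""
        self._status[tool] = status

    def outcome_for(self, tool):
        return OUTCOME_BY_STATUS[self.observe(tool)]


registry = ToolRegistry()
first_seen = registry.outcome_for("unknown-finance-tool")  # surfaces for review
registry.set_status("unknown-finance-tool", ToolStatus.BLOCKED)
after_review = registry.outcome_for("unknown-finance-tool")
```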

Policies can be shaped by role, team, and approved workflow without turning the system into an unreadable rules engine.

Warn and block flows are functioning, persistent, and designed to create clear policy moments that administrators can explain later.

Adoption is increasing in approved environments. Unknown tool usage is concentrated in a small set of teams. Critical events are low volume and policy coverage is improving.

Approved tool usage is growing with governance, not around it. That is the operational proof point leadership needs.

Spend visibility, policy evidence, and audit readiness live in the same operating surface instead of separate reporting conversations.

Employees can inspect their own governance record, understand policy boundaries, and see that the platform is designed to avoid surveillance.

C.L.A.R.A. gives each stakeholder the signal they need without turning governance into a surveillance programme or a reporting burden.

Shadow AI, credential leakage, unmanaged external tools, and unverifiable control claims.

Real-time local detection, enforceable policy, and structured evidence that controls were actually applied.

Security teams need a trustable control surface before AI usage becomes impossible to unwind.

Rollout complexity, unmanaged tool sprawl, and requests for visibility that create support burden.

A lightweight extension, centralised policy, and role-aware dashboards that fit standard enterprise administration.

IT becomes the operating layer between enthusiastic adoption and board-level accountability.

Duplicate licences, unmanaged procurement, unapproved external usage, and budget drift without clear value.

Visibility into tool adoption posture and the governance context needed to rationalise spend.

Finance is increasingly expected to judge AI exposure, not just software cost.

Regulated data movement, employee trust, audit readiness, and systems that gather more than they should.

Privacy-by-design architecture, transparent evidence records, and governance without prompt or content capture.

The governance system itself must be defensible, not merely the policy it claims to enforce.

The company learns what it needs to govern AI use without reading employee work. That is the line C.L.A.R.A. refuses to cross.

We work with select organisations that need a credible AI governance layer before AI usage, policy pressure, and audit requirements outpace existing controls.

Focused deployment to a defined user group with Shadow AI discovery, policy review, and live warn or block flows.

Implementation guidance for IT, governance calibration for security and compliance, and employee transparency framing from day one.

Leadership-ready summary of usage posture, policy outcomes, and next-stage governance recommendations.

C.L.A.R.A. is already credible in pilot mode. The roadmap extends that foundation rather than replacing it.

This is a category-creation opportunity at the intersection of security infrastructure, governance software, and enterprise AI adoption.

Privacy-first architecture is not a brand position. It is a structural moat. Behavioural telemetry compounds the more enterprises govern AI at the edge. The result is a platform that can define how serious organisations adopt AI without crossing into surveillance.

C.L.A.R.A.'s metadata-only, local-detection model is difficult to replicate cleanly once competitors have built around content capture and surveillance assumptions.

Behavioural telemetry across tools, policy states, and enterprise roles becomes more valuable as governance matures across customers.

C.L.A.R.A. is not a thin analytics layer. It is a new operating category for AI governance without surveillance.

The product sits directly inside enterprise conversations about risk, spend, control, and defensible adoption. That is where enduring platforms are built.